Creating a Windows Server 2008 R2 Failover Cluster

I hear you…you want your SQL, DHCP, Hyper-V or other services to be highly available for your clients or your internal users. They can be, if you create a Windows Failover Cluster and configure those services in the cluster. That way, if one of the servers crashes, the other one (or others) will take over, and users will see at most a brief interruption. There are two types of Failover Clusters: active/active and active/passive. In an active/active cluster all nodes run clustered applications or services at the same time. In an active/passive cluster the clustered applications or services run on only one node at a time, and the other node(s) stand by in case the active node fails.

For now I just want to show you how to create an Active/Passive Windows Failover Cluster. For the shared storage I will use iSCSI, since I can’t get my hands on a SAN here at home. The iSCSI target is from StarWind, which is more than enough to create and test your Windows cluster; if you want to follow along, a trial version is available for download at this page. To run a Windows cluster, an Active Directory domain needs to be present. For this guide all servers are running Windows Server 2008 R2 Enterprise. You need either the Enterprise or the Datacenter edition, because the Standard edition does not support failover clustering. The following table lists the network configuration of each cluster node.

| Setting | Node1 | Node2 |
| --- | --- | --- |
| Network 1 (LAN) | 192.168.50.10 | 192.168.50.11 |
| Network 2 (iSCSI) | 10.0.0.10 | 10.0.0.11 |
| Network 3 (Heartbeat) | 1.1.1.1 | 1.1.1.2 |
| Domain membership | Domain member | Domain member |
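If you want to double-check a plan like this before touching the adapters, here is a minimal Python sketch using the standard `ipaddress` module. The /24 prefix on every network is my assumption (the table above doesn’t list subnet masks), and the `PLAN` dictionary is just a hypothetical encoding of the table. It checks that both nodes sit in the same subnet on each network, that no address is used twice, and that the three networks don’t overlap.

```python
import ipaddress
from itertools import combinations

# Hypothetical encoding of the table above; the /24 prefixes are an assumption.
PLAN = {
    "LAN": {"Node1": "192.168.50.10/24", "Node2": "192.168.50.11/24"},
    "iSCSI": {"Node1": "10.0.0.10/24", "Node2": "10.0.0.11/24"},
    "Heartbeat": {"Node1": "1.1.1.1/24", "Node2": "1.1.1.2/24"},
}


def check_plan(plan):
    """Return a list of problems found in the per-network address plan."""
    problems = []
    subnets = {}
    seen = set()
    for net_name, nodes in plan.items():
        ifaces = [ipaddress.ip_interface(addr) for addr in nodes.values()]
        networks = {iface.network for iface in ifaces}
        # Every node on one cluster network should be in the same subnet.
        if len(networks) != 1:
            problems.append(f"{net_name}: nodes are in different subnets {sorted(map(str, networks))}")
        subnets[net_name] = ifaces[0].network
        # No IP address may appear twice anywhere in the plan.
        for node, iface in zip(nodes, ifaces):
            if iface.ip in seen:
                problems.append(f"{net_name}: {iface.ip} on {node} is already in use")
            seen.add(iface.ip)
    # LAN, iSCSI and Heartbeat must be separate, non-overlapping subnets.
    for (name_a, net_a), (name_b, net_b) in combinations(subnets.items(), 2):
        if net_a.overlaps(net_b):
            problems.append(f"{name_a} and {name_b} overlap ({net_a}, {net_b})")
    return problems


print(check_plan(PLAN) or "Plan looks consistent")
```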

I added a separate network card just for the iSCSI traffic, because I don’t want that traffic on my LAN “hurting” the switches. I recommend you do the same in a production environment; otherwise your LAN will suffer. Normally the Register this connection’s addresses in DNS box should be cleared in the TCP/IP settings of the iSCSI and Heartbeat adapters, but since these networks are completely separated from the LAN it’s OK to leave the box checked; they are not going to register anywhere.

After you configure the IP addresses on every network adapter, verify the order in which the connections are accessed. In Network Connections, click Advanced > Advanced Settings (press Alt if the menu bar is hidden) and make sure your LAN connection is at the top of the list. If it isn’t, use the up and down arrows to move it there. This is the network clients will use to connect to the services offered by the cluster.
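The rule here is simply “LAN first, everything else after it”. As a small Python sketch (the connection names are hypothetical; use whatever names your adapters have in Advanced Settings), this returns the list with the LAN connection moved to the top while keeping the relative order of the others, which is the same result you get by clicking the up arrow until the LAN is first.

```python
def lan_first(order, lan="LAN"):
    """Return the binding order with the LAN connection moved to the top.

    `order` is the list of connection names as shown in Advanced Settings;
    the names used below are hypothetical examples.
    """
    if lan not in order:
        raise ValueError(f"{lan!r} is not in the binding order")
    # Keep the remaining connections in their current relative order.
    return [lan] + [name for name in order if name != lan]


current = ["iSCSI", "LAN", "Heartbeat"]
print(lan_first(current))  # ['LAN', 'iSCSI', 'Heartbeat']
```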

Verify connectivity on every network on every cluster node, using PING.
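With two nodes and three networks, “every network on every node” means each node pings its partner’s address on each network, six checks in total. Here is a Python sketch that builds that checklist from a hypothetical copy of the address table (prefixes dropped) and, only when you call `run_checks`, runs the Windows `ping -n 2` command for the current node’s entries through `subprocess`. Treating exit code 0 as “reachable” is a simplification; read the ping output if a result looks odd.

```python
import subprocess

# Hypothetical copy of the address table above.
PLAN = {
    "LAN": {"Node1": "192.168.50.10", "Node2": "192.168.50.11"},
    "iSCSI": {"Node1": "10.0.0.10", "Node2": "10.0.0.11"},
    "Heartbeat": {"Node1": "1.1.1.1", "Node2": "1.1.1.2"},
}


def ping_checks(plan):
    """List (from_node, network, target_ip) for every peer address on every network."""
    checks = []
    for net_name, nodes in plan.items():
        for src in nodes:
            for dst, ip in nodes.items():
                if dst != src:
                    checks.append((src, net_name, ip))
    return checks


def run_checks(checks, this_node):
    """Ping each target on this node's checklist (Windows ping: -n sets the echo count)."""
    results = {}
    for src, net_name, ip in checks:
        if src == this_node:
            rc = subprocess.run(["ping", "-n", "2", ip], capture_output=True).returncode
            results[(net_name, ip)] = rc == 0  # simplification: exit code 0 means reachable
    return results


for check in ping_checks(PLAN):
    print(check)
# On Node1 you could then run: print(run_checks(ping_checks(PLAN), "Node1"))
```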

If everything is in order, let’s go and configure the iSCSI target. I’m not going to show you how to install the software here, because it’s straightforward: click Next a few times and you’re done. In the StarWind console, right-click StarWind Servers and choose Add Host.

Type the IP address or FQDN of your StarWind server. Since my server is on the same box as the console I will just type the loopback address. Click OK to connect.

Once the StarWind server is added to the console, right-click it and choose Connect.

Type the credentials to connect to the server and hit OK. If you are using the default credentials, like I am here, type root in the Login box and starwind in the Password box. You can change them later if you want to.

Once connected, you should see the Targets object in the console.

Now we need to create the quorum disk, a shared disk where the cluster keeps its configuration information so that all nodes in the cluster can access it. Right-click Targets and choose Add Target.
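Why does a two-node cluster need this extra disk? This isn’t spelled out above, but with an even number of nodes Windows Server 2008 R2 normally uses the Node and Disk Majority quorum mode: each node and the witness (quorum) disk gets one vote, and the cluster keeps running only while more than half of the votes are available. A short Python sketch of that arithmetic (the `has_quorum` function is mine, not a Windows API):

```python
def has_quorum(nodes_up, total_nodes, witness_up, use_witness=True):
    """Majority-vote check: one vote per node, plus one for the witness disk if used."""
    total_votes = total_nodes + (1 if use_witness else 0)
    votes = nodes_up + (1 if use_witness and witness_up else 0)
    # Quorum requires strictly more than half of all votes.
    return votes > total_votes // 2


# Two nodes plus a witness disk: 3 votes, so 2 are needed.
print(has_quorum(nodes_up=1, total_nodes=2, witness_up=True))                      # True
# The same two nodes without a witness: losing one node loses quorum.
print(has_quorum(nodes_up=1, total_nodes=2, witness_up=False, use_witness=False))  # False
```

That second case is the reason the witness disk exists: without it, a two-node cluster can’t survive the loss of either node.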

Give the target a name and hit Next.

As storage type, choose Hard Disk.

If you want to use a physical disk attached to your StarWind iSCSI server, go with the first option, but to keep this example simple I’m going to create a virtual disk.

Select Image File device and click Next.

Choose the second option Create new virtual disk.

Provide the path where the virtual disk should be stored, then type the size of the disk. Microsoft recommends that quorum disks be at least 500 MB in size, but I always make mine 1 GB. If you need more information, read this Microsoft KB.

Here, check the Allow multiple concurrent iSCSI connections (clustering) box, then click Next. This is what lets both cluster nodes connect to the same disk.

Leave the default options here and continue the wizard.

On the Summary screen click Next to create the iSCSI virtual disk.

Here click Finish to close the wizard.

Now repeat the same steps to create a data disk, this time a bigger one; mine is 10 GB. The disk size depends on the services that will run in the cluster. For example, if you are running SQL Server in the cluster, you will need disks larger than 10 GB, and you will most likely need more than one. At the end you should have all your disks listed in the StarWind console.
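If you keep your disk plan in a script, a small Python check like this one catches a missing or undersized quorum disk before you build the cluster. The 500 MB floor is the recommendation quoted earlier; the target names, the convention of calling the quorum disk “Quorum”, and the 1 GB = 1024 MB conversion are my assumptions.

```python
MIN_QUORUM_MB = 500  # Microsoft's recommended minimum for the quorum disk


def to_mb(size_text):
    """Parse sizes like '1 GB' or '500 MB' into MB (assumes 1 GB = 1024 MB)."""
    value, unit = size_text.split()
    factors = {"MB": 1, "GB": 1024}
    return float(value) * factors[unit.upper()]


def check_disks(disks):
    """Return problems with a {name: size_text} inventory of iSCSI virtual disks."""
    problems = []
    if "Quorum" not in disks:
        problems.append("no quorum disk")
    elif to_mb(disks["Quorum"]) < MIN_QUORUM_MB:
        problems.append(f"quorum disk is only {disks['Quorum']}")
    # Every other disk is treated as a data disk; at least one is required.
    if not [name for name in disks if name != "Quorum"]:
        problems.append("no data disk")
    return problems


# Hypothetical target names; the sizes match this guide.
print(check_disks({"Quorum": "1 GB", "Data": "10 GB"}))  # []
```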

Now let’s take care of the cluster nodes, starting with the first one, Node1. To install the Failover Clustering feature, click Start > Administrative Tools > Server Manager.

Right-click Features and choose Add Features.

Check the Failover Clustering box and hit Next then Install.

Repeat this on the second node. Back on Node1, we need to connect the iSCSI disks we created earlier. Go to Start > Administrative Tools > iSCSI Initiator and click Yes in the message that pops up to start the iSCSI service. In the Target box, type the IP address or FQDN of your iSCSI target (the server where the virtual disks are stored; use its address on the iSCSI network so the storage traffic stays off the LAN), then click the Quick Connect button.

In the Quick Connect window, select each discovered target and click the Connect button.

Now those drives should be visible in the Disk Management console.

Bring those disks online, initialize them, format them with NTFS and assign each one a drive letter. When you are done, each disk should show as online, formatted NTFS, with a drive letter.

Do the same on the second node, but DO NOT BRING THE DISKS ONLINE; leave them offline, because two servers writing to the same NTFS volume at the same time can corrupt it. Now, from Administrative Tools, open Failover Cluster Manager. In the console, right-click Failover Cluster Manager and choose Create a Cluster. Normally you would validate the configuration first with Validate a Configuration, but since I’ve done this a few times I’m going straight to the Create Cluster Wizard, which offers to run validation anyway.
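To make the offline rule concrete: until the cluster takes ownership of the disks, no disk should be online on more than one node. Here is a Python sketch of that check over a hypothetical state table that you would fill in by hand from Disk Management on each node (the function and the status strings are my own, not something Windows exports in this form).

```python
def disks_online_on_multiple_nodes(state):
    """state maps node -> {disk: 'Online' | 'Offline'}; return disks online on 2+ nodes."""
    online_count = {}
    for disks in state.values():
        for disk, status in disks.items():
            if status == "Online":
                online_count[disk] = online_count.get(disk, 0) + 1
    # Any disk online on more than one node is at risk of corruption.
    return sorted(disk for disk, count in online_count.items() if count > 1)


state = {
    "Node1": {"Quorum": "Online", "Data": "Online"},
    "Node2": {"Quorum": "Offline", "Data": "Offline"},
}
print(disks_online_on_multiple_nodes(state))  # []
```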

In the Enter server name box, type the name of each server that will participate in the cluster and click the Add button.

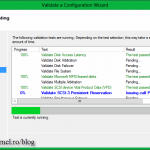

Leave the default option to validate the cluster and click Next. In the Validate a Cluster Wizard go with the defaults.

At the end you should see only green checks. If you have errors or warnings fix them before continuing.

Back in the Create Cluster Wizard, type a name for the cluster (one that describes the service running on it) and assign it an IP address. I recommend using a static IP address rather than one assigned by DHCP. When the wizard creates the cluster, it also tries to create a computer account named after the cluster in Active Directory. If the account you are logged in with does not have permission to create computer accounts in Active Directory, ask your AD team to create (prestage) the computer account before you create the cluster.
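Two quick sanity checks before you click Next: the cluster name becomes a computer account, so it has to fit the 15-character NetBIOS limit, and the static IP should be a free host address in the LAN subnet. A Python sketch of both checks follows; the name `SQLCLUSTER01` and the address `192.168.50.20` are hypothetical (this guide’s actual values aren’t in the text), the /24 LAN prefix is assumed, and restricting names to letters, digits and hyphens is a conservative choice on my part.

```python
import ipaddress
import re

# Assumptions: the LAN is a /24 and these are the node addresses from the table.
LAN = ipaddress.ip_network("192.168.50.0/24")
NODE_IPS = {ipaddress.ip_address("192.168.50.10"), ipaddress.ip_address("192.168.50.11")}


def check_cluster_identity(name, ip_text):
    """Return problems with a proposed cluster name and static cluster IP."""
    problems = []
    if len(name) > 15:
        problems.append("name is longer than 15 characters (NetBIOS limit)")
    if not re.fullmatch(r"[A-Za-z0-9-]+", name):
        problems.append("name should use only letters, digits and hyphens")
    ip = ipaddress.ip_address(ip_text)
    if ip not in LAN:
        problems.append(f"{ip} is not in the LAN subnet {LAN}")
    elif ip in (LAN.network_address, LAN.broadcast_address):
        problems.append(f"{ip} is the network or broadcast address")
    elif ip in NODE_IPS:
        problems.append(f"{ip} is already used by a node")
    return problems


print(check_cluster_identity("SQLCLUSTER01", "192.168.50.20"))  # []
```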

Click Next to start creating the cluster.

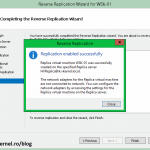

When it’s done click Finish to close the wizard.

If you take a look in AD, you should see the cluster computer account created by the Create Cluster Wizard. Again, this happened because I was logged in with a domain admin account when I ran the wizard.

The cluster is created, but a few things still need to be checked. Verify that the wizard assigned the correct disk as the quorum and that only the LAN network adapter accepts client connections. To check the quorum disk, click the Storage object in the console. It looks like the wizard was smart enough to know which disk to use for the cluster quorum.

If you right-click each network and choose Properties, only the LAN network should have the Allow clients to connect through this network option enabled.
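If you like to record what you expect to see in those Properties dialogs, a Python sketch like this one flags any network other than the LAN that allows clients, and also catches the LAN itself having the option turned off. The dictionary is hypothetical and filled in by hand; the key names are my own choice.

```python
def client_access_problems(networks, lan="LAN"):
    """networks maps name -> True if 'Allow clients to connect through this network' is checked."""
    problems = []
    if not networks.get(lan, False):
        problems.append(f"{lan} does not allow client connections")
    for name, allows_clients in networks.items():
        # Storage and heartbeat networks should never carry client traffic.
        if name != lan and allows_clients:
            problems.append(f"{name} should not allow client connections")
    return problems


print(client_access_problems({"LAN": True, "iSCSI": False, "Heartbeat": False}))  # []
```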

As a tweak, I like to rename my cluster networks based on their purpose.
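The cluster gives its networks generic names (Cluster Network 1, 2, 3 and so on), and that numbering may not match the order of your adapters, so the safest way to rename them is by subnet. A Python sketch of that mapping; the subnet-to-purpose table is my assumption based on the addresses in this guide (with /24 prefixes assumed), and the example input order is hypothetical.

```python
import ipaddress

# Assumed /24 subnets from the address table; names reflect each network's purpose.
PURPOSE_BY_SUBNET = {
    ipaddress.ip_network("192.168.50.0/24"): "LAN",
    ipaddress.ip_network("10.0.0.0/24"): "iSCSI",
    ipaddress.ip_network("1.1.1.0/24"): "Heartbeat",
}


def new_names(cluster_networks):
    """Map each default cluster network name to a purpose-based name, keyed by its subnet."""
    renames = {}
    for default_name, subnet_text in cluster_networks.items():
        subnet = ipaddress.ip_network(subnet_text)
        # Unknown subnets keep their default name.
        renames[default_name] = PURPOSE_BY_SUBNET.get(subnet, default_name)
    return renames


# Example input as you might read it from Failover Cluster Manager (hypothetical order).
print(new_names({"Cluster Network 1": "10.0.0.0/24",
                 "Cluster Network 2": "192.168.50.0/24",
                 "Cluster Network 3": "1.1.1.0/24"}))
```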

To verify that it works, shut down Node1 (since it is the active node right now) and check that the quorum and data disks fail over to the second node. Now you can start installing clustered applications like SQL, DHCP, etc. I’ll show you how in a future guide; until then… cheers.
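As a mental model of what that test should show, here is a toy Python simulation of an active/passive pair. It is not how the cluster service is implemented; it assumes the Node and Disk Majority quorum mode (one vote per node plus one for the witness disk). When the active node goes down, the disks move to the surviving node, and the cluster keeps quorum because Node2 and the witness disk still hold two of the three votes.

```python
class ActivePassiveCluster:
    """Toy model of a two-node active/passive cluster with a witness disk."""

    def __init__(self, nodes, disks):
        self.up = {node: True for node in nodes}
        self.owner = nodes[0]      # the first node starts as the active node
        self.disks = list(disks)
        self.witness_up = True

    def has_quorum(self):
        # Assumed Node and Disk Majority: one vote per node plus one for the witness disk.
        votes = sum(self.up.values()) + (1 if self.witness_up else 0)
        total = len(self.up) + 1
        return votes > total // 2

    def shut_down(self, node):
        self.up[node] = False
        if not self.has_quorum():
            self.owner = None      # without quorum the cluster stops serving the disks
        elif node == self.owner:
            # Fail over to the first surviving node.
            self.owner = next(n for n, alive in self.up.items() if alive)

    def owners(self):
        return {disk: self.owner for disk in self.disks}


cluster = ActivePassiveCluster(["Node1", "Node2"], ["Quorum", "Data"])
cluster.shut_down("Node1")
print(cluster.owners(), cluster.has_quorum())  # {'Quorum': 'Node2', 'Data': 'Node2'} True
```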

Thanks so much for the best written and explained article.

Thanks for passing by.

I am late to the party but I had two slight issues following this guide.

The first is that, although you mention the StarWind trial, the free version should do what is needed, except that I am setting this system up using ESXi to host my Windows Servers, and the free version of StarWind will not install on Windows Server running on a hypervisor. So the trial or paid versions of StarWind are the only way to go.

The second is that the failover cluster feature is only available on Windows Server 2008 R2 Enterprise. You cannot install it on Standard.

I hope this helps anyone looking at this guide all these years later.

Hi,

First of all, thanks for pointing that out. Looks like I forgot to mention which editions support clustering. I have updated the article.

If you have Windows Server 2012, you don’t need any third-party iSCSI target software: Server 2012 has one built in that works really well and is also compatible with ESXi.

Adrian,

Very nice article. Just a very basic question, as I am new to this; sorry if it is silly. What you have explained above is applicable to Windows 2008 virtual servers also. Am I correct?

Yes, it can be applied to virtual servers also, but…most of the time clusters are not built on virtual machines. This is because the VMs are already running on an infrastructure that is in a failover cluster (the physical machines running the hypervisor).

Excellent! A crystal clear way to do things. However, I followed along using Openfiler instead of StarWind… 🙂

Very nice post! I hope you can also create an installation guide for active/active.

Thanks. I put it on the list 🙂

One of the best articles I have ever seen on failover clustering installation. Thanks!

Thanks. I’m glad you like it.

One of the best posts!!! Nicely written, detailed and easy to understand.